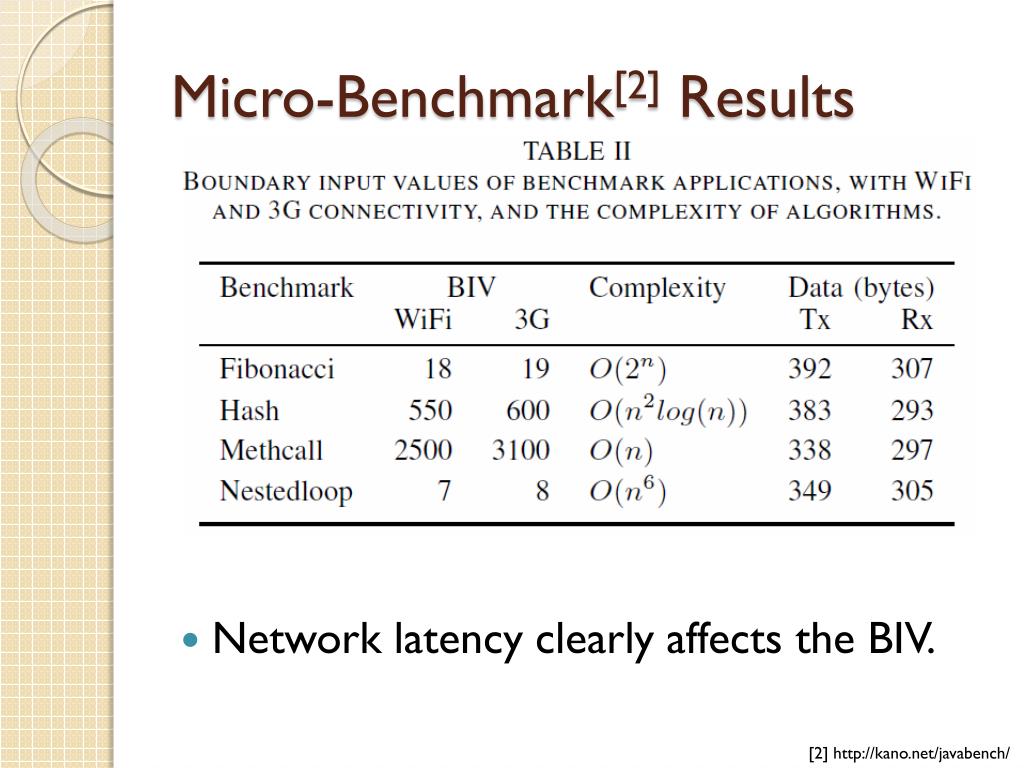

On one occasion, A performed better than B when I measured A before B, while the trend reversed when I placed B before A, or something like that (maybe it was the opposite; I don't recall).

For a similar reason, microbenchmark results can unfairly favor algorithms with a lot of manually unrolled loops and similar tricks, which, inside real-world programs, can mean poorer use of the instruction cache.

For a similar reason, microbenchmark results can unfairly favor algorithms that rely on a large table of static data, which, inside real-world programs, can mean poorer use of the data cache.

If the algorithm you're measuring is very fast, you can't reliably measure the time of a single run, so you have to loop over it. But looping over it (especially with the same input) can help the CPU make better and better branch predictions, which can skew the result in favor of algorithms with a lot of branching.

Depending on where the code for the algorithms is placed in the project, the compiler can make different inlining decisions, which can have a cascading effect on end performance.

At least in theory, pseudorandom generators have certain statistical flaws, and those might interfere with the result.
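One common way to keep measurement order from deciding the winner between A and B is to alternate the order across rounds and compare medians. The sketch below is only an illustration of that idea, not the method used for the measurements described here; it assumes Python's `time.perf_counter`, and `algo_a` / `algo_b` are hypothetical stand-ins for the two algorithms being compared.

```python
import statistics
import time
from typing import Callable, Dict, List, Sequence


def time_once(fn: Callable[[Sequence[int]], object], data: Sequence[int]) -> float:
    """Return wall-clock seconds for a single call of fn(data)."""
    start = time.perf_counter()
    fn(data)
    return time.perf_counter() - start


def compare_both_orders(
    fns: Dict[str, Callable[[Sequence[int]], object]],
    data: Sequence[int],
    rounds: int = 10,
) -> Dict[str, float]:
    """Time each function in alternating order; return the median per name.

    Even rounds run the functions in their given order and odd rounds reverse
    it, so no function always benefits (or suffers) from going first.
    """
    names = list(fns)
    for name in names:
        fns[name](data)  # untimed warm-up call, so first-call effects are not sampled
    samples: Dict[str, List[float]] = {name: [] for name in names}
    for r in range(rounds):
        order = names if r % 2 == 0 else list(reversed(names))
        for name in order:
            samples[name].append(time_once(fns[name], data))
    return {name: statistics.median(ts) for name, ts in samples.items()}


# Hypothetical stand-ins for "A" and "B".
def algo_a(data: Sequence[int]) -> int:
    return sum(data)


def algo_b(data: Sequence[int]) -> int:
    total = 0
    for x in data:
        total += x
    return total


if __name__ == "__main__":
    data = list(range(100_000))
    for name, t in compare_both_orders({"A": algo_a, "B": algo_b}, data).items():
        print(f"{name}: {t * 1e3:.3f} ms")
```

Alternating the order only averages out order effects. It doesn't remove them, and it can't address the cache and inlining differences between a microbenchmark and a real program.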

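For an algorithm too fast to time in a single run, one option is to time a whole batch and divide by its size, while feeding a different pre-generated input on each iteration so that data-dependent branches are not trivially predictable. The following is a minimal sketch under assumptions: `collatz_steps` is a hypothetical branchy function standing in for the algorithm under test, and inputs come from Python's `random.Random` with a fixed seed. Seeding makes the input sequence reproducible, but it does nothing about the statistical flaws of the generator itself.

```python
import random
import time
from typing import Callable, List


def make_inputs(count: int, seed: int = 12345) -> List[int]:
    """Pre-generate varied inputs so the timed loop does no RNG work."""
    rng = random.Random(seed)  # fixed seed: every run sees the same sequence
    return [rng.randrange(1 << 30) for _ in range(count)]


def per_call_seconds(fn: Callable[[int], object], inputs: List[int]) -> float:
    """Average wall-clock seconds per call over a batch of distinct inputs.

    A single call is too short to time reliably, so the whole batch is timed
    and the total is divided by the number of calls.
    """
    start = time.perf_counter()
    for x in inputs:
        fn(x)
    elapsed = time.perf_counter() - start
    return elapsed / len(inputs)


def collatz_steps(n: int) -> int:
    """Hypothetical function under test: Collatz steps needed to reach 1."""
    steps = 0
    while n > 1:
        n = n // 2 if n % 2 == 0 else 3 * n + 1  # data-dependent branch
        steps += 1
    return steps


if __name__ == "__main__":
    inputs = make_inputs(10_000)
    print(f"{per_call_seconds(collatz_steps, inputs) * 1e9:.1f} ns per call")
```

In Python, interpreter overhead largely hides branch-prediction effects. The same batch-over-varied-inputs structure matters more in compiled languages, where the loop overhead is small.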